From Complex Planning Tool to Core Primitive

Designing the operating logic for Canvas, AI Mode's artifact-driven surface.

Phase one: From response architecture to multi-turn conversation design

The work started as exploratory research into how AI Mode could support complex, multi-turn user journeys. I joined a cross-functional team tasked with figuring out what a high-quality, multi-step planning experience should look and feel like.

The work quickly grew in scope. I was writing example multi-turn conversations, collaborating with designers on UI elements, and crafting the instructions that delivered content. Those instructions were producing responses that were genuinely helpful, actionable, and high quality.

Complex planners needed actionable insights

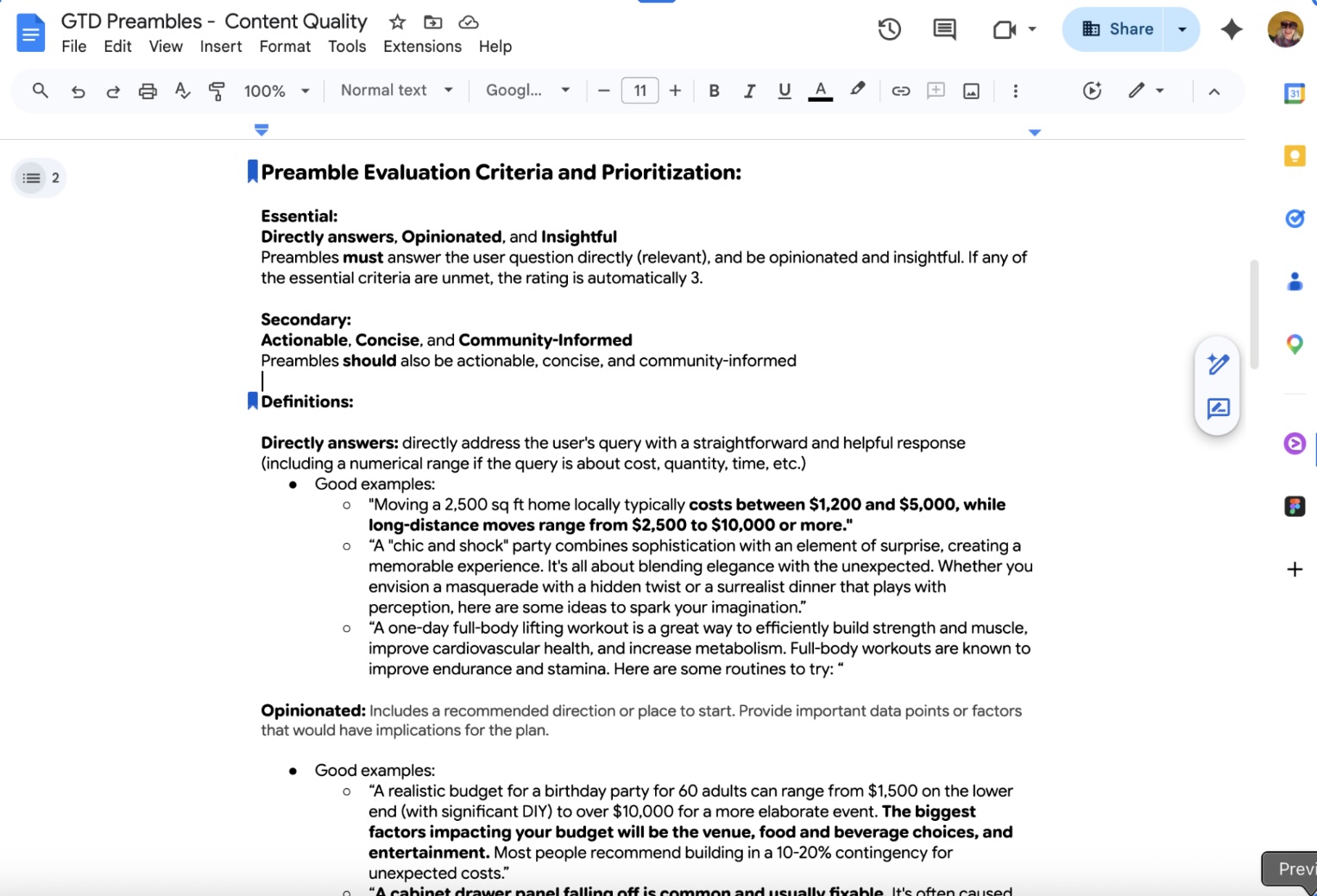

Defining what made responses useful was the bulk of our early work. Through detailed descriptions and instructions, we shaped responses that compressed the planning lifecycle and included insights from people who had been through these journeys before.

"Writing the conversations you want to exist before you write a single instruction. That's where the real design happens."

Phase two: Canvas

Because the responses our prompting generated were both visual and actionable, the team was asked to broaden its mandate and build out AI Mode's Canvas product, translating the journeys we'd designed into an artifact-driven experience. This is where the work got really interesting.

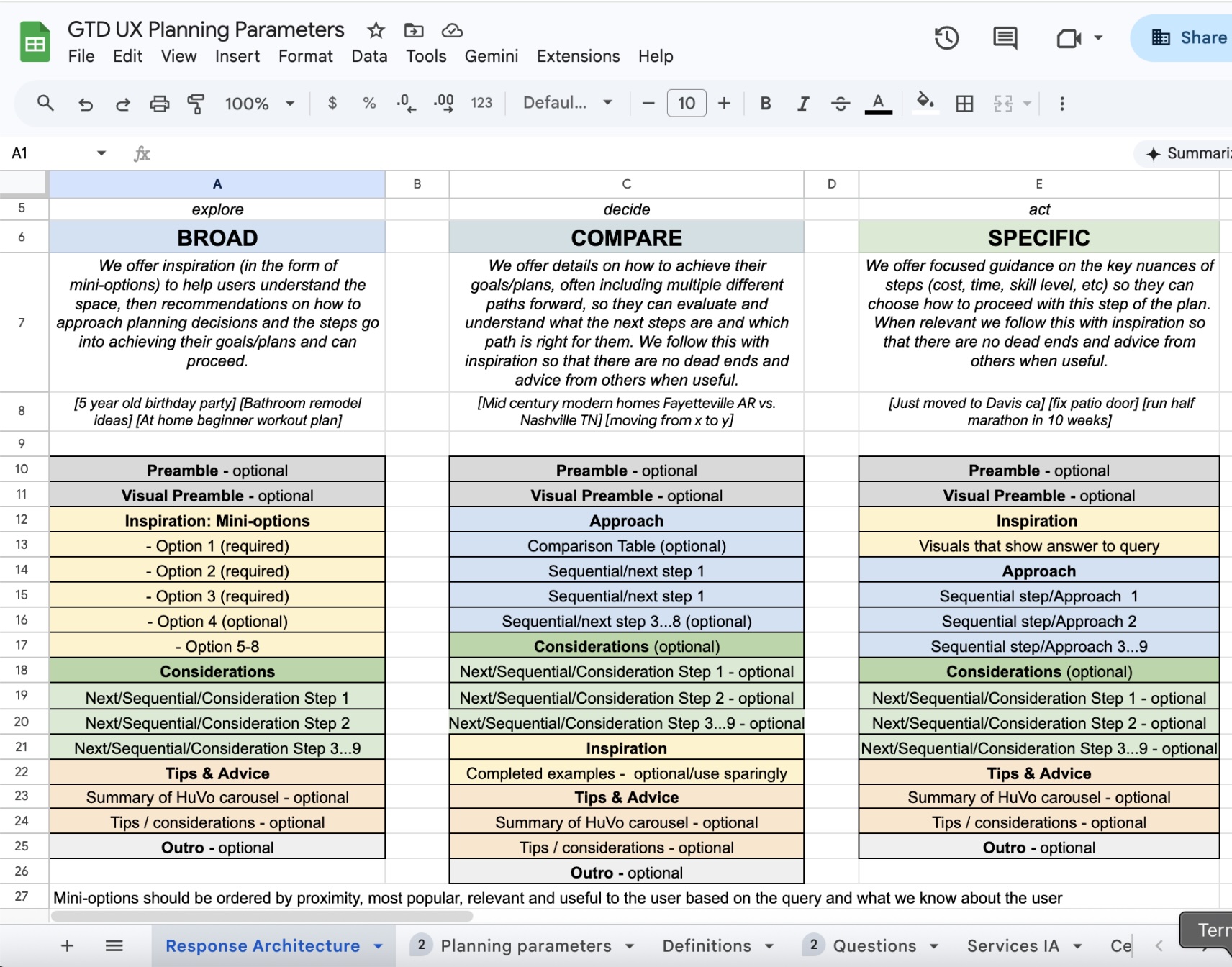

I defined the operating rubric governing the interplay between the chat conversation and the artifact: when and how the model should use tables, maps, and images versus staying in prose. I trained the model on what actionable, insightful, and helpful responses looked like, and wrote the onboarding messaging to bring users from AI Mode into Canvas.

Scaling across Search

My role evolved into a stewardship function. I owned the documentation and operating standards needed to collaborate with vertical teams across Shopping, Travel, and Education, ensuring Canvas worked consistently across all of Search's priority use cases.

Results

Canvas shipped as a core surface in AI Mode, with artifact-driven experiences live across travel and planning verticals. The operating rubric I authored became the shared source of truth for how Search's vertical teams build inside Canvas.

See Canvas in Search →