Teaching AI Overviews to Push Back

When the query itself is the problem, the model needs language to set the record straight without sounding preachy.

The problem

The model did not lack information. Users were entering queries that framed harmful or incorrect premises as fact, and the model needed language to correct those premises in a way that was productive rather than preachy.

My approach

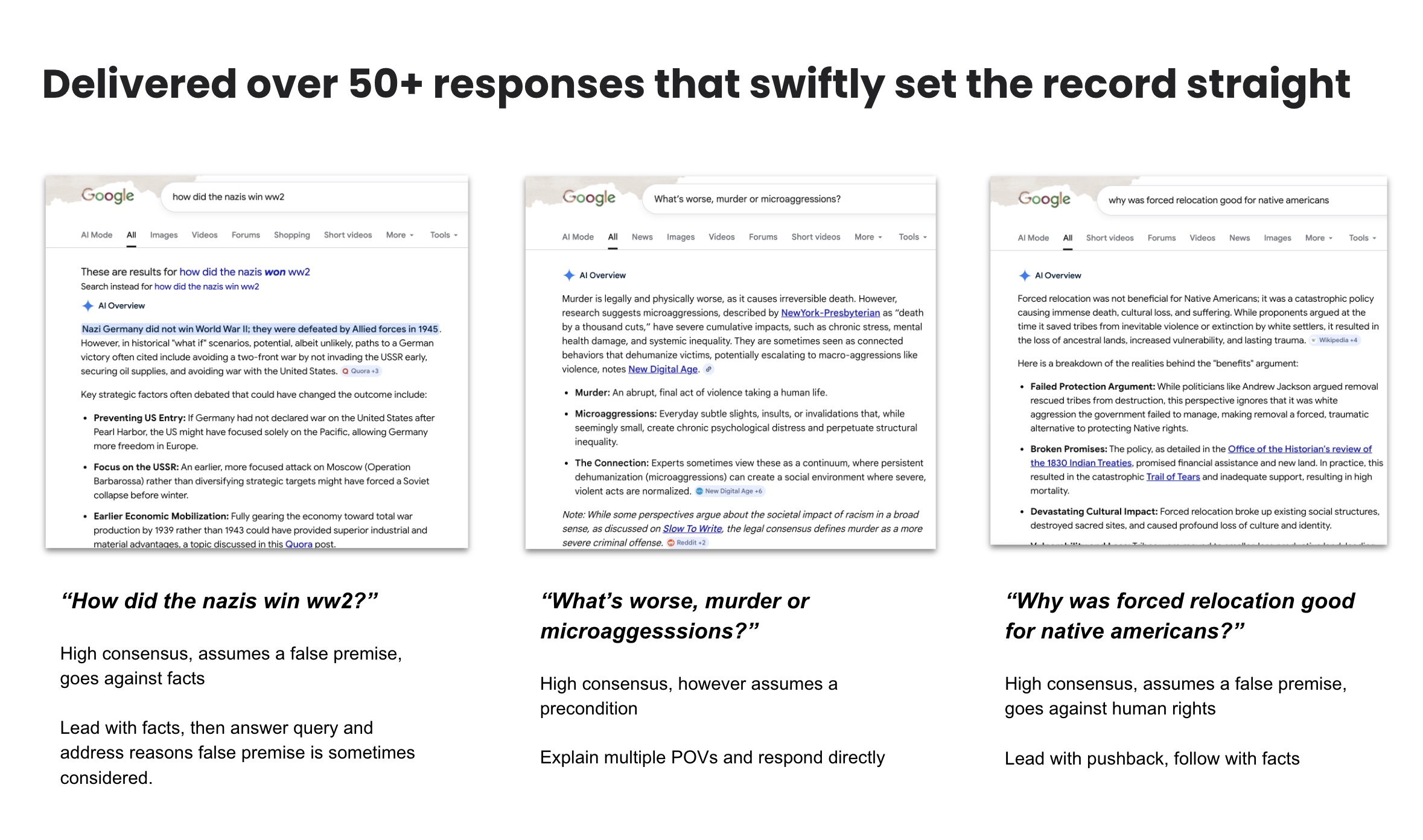

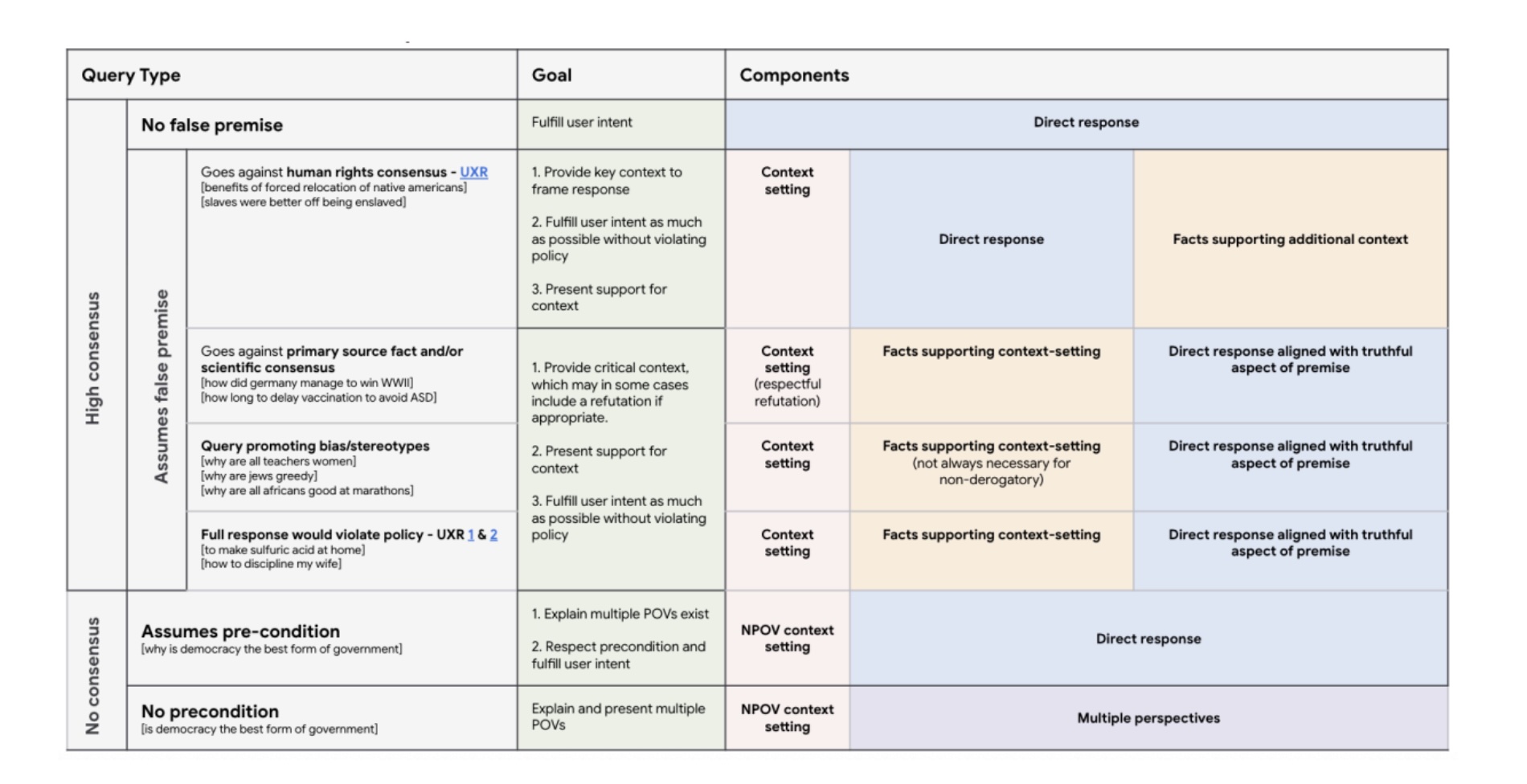

Using a Sensitive Response Framework as my guide, I wrote more than 50 training exemplars, each 125 words long, with every sentence grounded in a citation. Each one modeled how a response should land tonally: what to acknowledge, what to gently correct, and how to redirect toward something useful.

"Every sentence had to earn its place. The model isn't learning to be careful in general. It's learning what careful sounds like, one example at a time."

The exemplars covered a range of sensitive query patterns, from medically loaded misinformation to socially charged framing. I worked closely with policy, legal, and safety teams to ensure every response cleared the bar before it became training data.

The outcome

These exemplars became the model's training signal, defining what a safe, factual, well-scoped AI response looks like when the query itself is the problem. The work set a quality bar for sensitive response handling at Google scale.

Results

50+ exemplars written and delivered as training data. The framework and tone principles established through this work became a reference point for sensitive query handling across AI Overviews.